User experience is more than user opinion

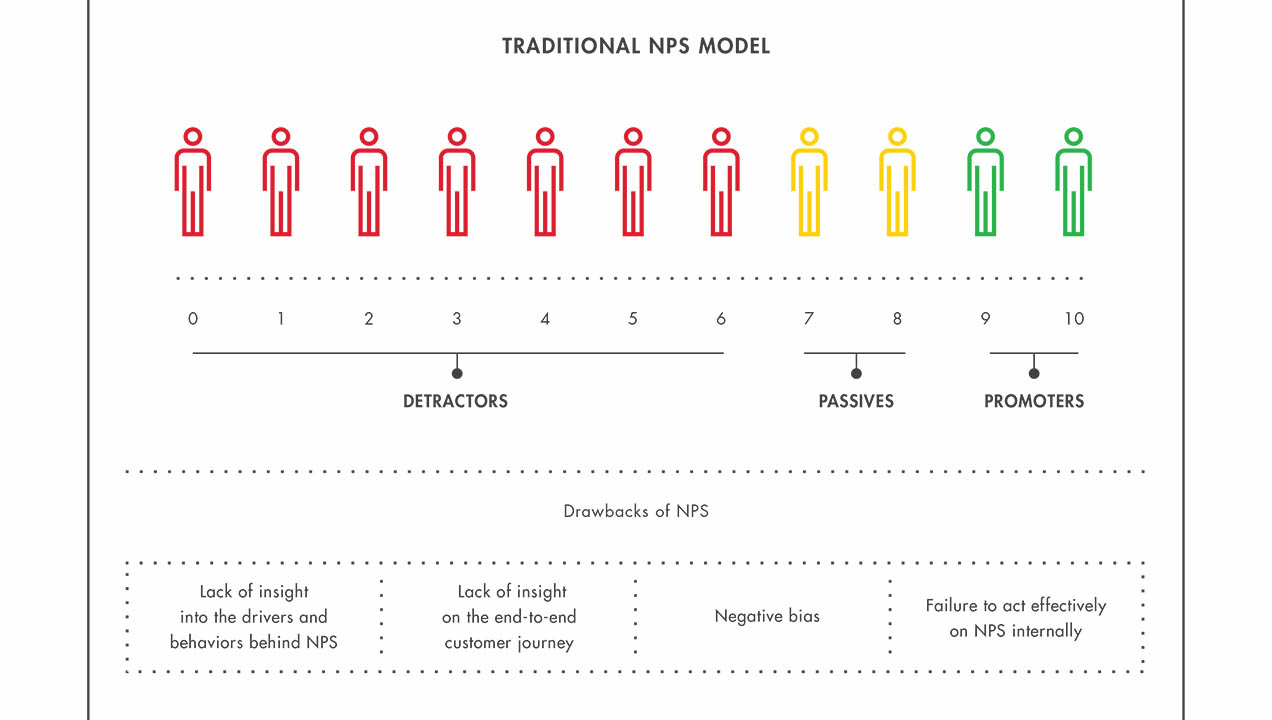

Customer experience matters so much to businesses that it is now common practice to track it with opinion measurement tools such as Net Promoter Score (NPS) and Customer Satisfaction Score (CSAT).
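To make concrete how simple these scores are, here is a minimal sketch of how they are commonly calculated. It assumes the usual conventions: NPS is based on 0 to 10 "how likely are you to recommend us" ratings, with 9 to 10 counted as promoters and 0 to 6 as detractors, and CSAT is the percentage of respondents choosing 4 or 5 on a 5-point satisfaction scale. The function names and sample ratings are illustrative only, and individual survey tools may use different scales or cut-offs.

```python
def nps(ratings):
    """Net Promoter Score from 0-10 'likelihood to recommend' ratings.

    Assumes the common convention: 9-10 = promoter, 0-6 = detractor.
    Returns a value between -100 and 100.
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


def csat(ratings, satisfied_from=4):
    """Customer Satisfaction Score from 1-5 satisfaction ratings.

    Assumes ratings at or above `satisfied_from` count as satisfied.
    Returns the percentage of satisfied respondents (0 to 100).
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    satisfied = sum(1 for r in ratings if r >= satisfied_from)
    return 100 * satisfied / len(ratings)


# Illustrative (made-up) survey responses
print(nps([10, 10, 9, 8, 5]))   # 3 promoters, 1 detractor, 5 responses
print(csat([5, 4, 4, 3, 1]))    # 3 of 5 respondents satisfied
```

Each score collapses every response into a single number, which is what makes it easy to track and also why it says little about what actually happened during the experience.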

Both of these measures produce simple scores that are easy to understand and track, which makes them very valuable. However, there’s more to customer experience than just asking people for their opinion of it. The reasons lie in fundamental aspects of human psychology, such as:

What we do and say may differ.

It’s possible to hold conflicting ideas simultaneously, and the discomfort this causes, which psychologists call “cognitive dissonance”, can lead to self-justification and rationalization rather than a straightforward account of a decision. For example, we may create a post-purchase justification for a treat we can’t really afford. As a result, what customers report after an event may reflect post-event rationalization rather than what they felt at the time. It’s relevant to their experience, but may not give you the insights that you need to improve it.

Our memory doesn’t act like a digital recorder.

Our minds don’t absorb and store everything we experience for instant recall. There’s simply too much information to capture it all. We perceive the visual world, for example, through rapid scanning movements of the eye, called saccades, and our minds fill in the gaps, which is one reason visual illusions work. So when we recall any experience, we’re recalling our mind’s construct of it, which may not be accurate and can change in small ways each time we recall it. The memory of an experience will capture the important bits but not the detail, and the detail may be exactly what matters.

We are naturally susceptible to bias.

As memory is a construct, how we recall an experience can be influenced by how we’re asked about it. For example, in one study people who had watched film of a car accident were asked how fast the cars were going. Those asked how fast the cars were going when they hit each other said about 34 mph, but those asked how fast they were going when they smashed into each other said 41 mph. Their memories also made up new information: 32% of those hearing the more emotive question reported seeing broken glass, whereas only 14% of the others did. The language we use and our approach to questions can make a real difference to the response we get, so it’s easy to introduce unintended bias into questionnaires.

We may be biased and not even realize it.

Biases naturally affect our decisions and behavior, and can affect how we rate a particular experience. A classic example is the Above Average Effect, in which people rate themselves as above average. To get the idea, try to think of how many people you know who would rate themselves as having below-average driving ability or a below-average sense of humor. This can be compounded by the Bias Blind Spot, a phenomenon whereby individuals fail to see the biases in their own judgment.

Our memory of an event is affected by when we are asked about it.

The immediate experience of an event isn’t the same as the memory of it. Daniel Kahneman, often called the father of behavioral economics, observed that the immediate experience of an event lasts only a few seconds in the psychological present, after which it becomes a memory. This means that how customers regard an event can vary substantially depending on whether they are asked during it or afterwards.

We don’t always understand what we’re experiencing.

It’s not unusual for questionnaires to ask respondents to consider pain points and analyze their experience in some way. However, it’s difficult for a questionnaire respondent to provide an accurate assessment of a process (especially issues with it) if they don’t understand it. They may overlook the significance of particular elements, or not report subtle lower-level problems that build cumulatively.

As humans, we’re complex, and our psychological make-up is critical to how we experience the world. NPS and CSAT are now essential business tools, but if you want to make real changes to the customer experience, it’s important to go beyond these metrics. That means directly observing behavior, using techniques such as ethnography or lab-based user testing.

The combination of techniques is powerful.

Survey tools can persuasively highlight areas of interest, as they provide something quantifiable that organizations can understand. Techniques involving direct observation can then reveal the drivers of behavior, the context, and the details of the interaction that need to change. Survey tools are then useful again as a way of validating any changes that have been made.

Organizations need to do both: track experiences over time and make effective, insight-driven changes to them. That combination is what produces long-term improvements.